QlikView (QV) is a visual dashboarding tool that can visualize large datasets thanks to QlikTech's unique in-memory architecture. In-memory technology enables a new, interactive way of navigating and understanding relationships in datasets. Analysis paths for dashboards don’t have to be conceived beforehand in a design process; they can emerge on the fly while exploring the data. QlikTech introduced the term Business Discovery to emphasize these new possibilities, in contrast to more traditional BI tools.

QV can handle exceptionally large datasets, thanks to its native data compression (about 10x) and the scalability of RAM. Sometimes just getting those large datasets into QV becomes the problem! While QV has some nice built-in features for parallel (multi-threaded) loading from relational database sources (RDBMS), loading from text formats such as CSV is less efficient because of parsing and type conversion. Very large flat-file data sources (up to 100GB) can take well over an hour to load. It makes little sense to introduce an additional RDBMS layer just to use QV's multi-threaded bulk loaders. Moreover, in many organisations flat files are the preferred way of exchanging data. Fortunately, there is now a solution for loading flat files into QV fast.

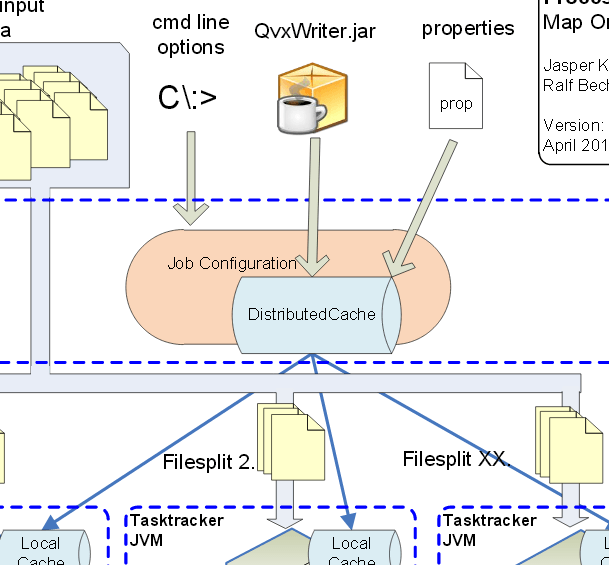

In 2011 QlikTech published documentation laying out the structure of a native but open file format named QVX. Data in QVX format can be loaded up to 3 times faster because it is already preprocessed in a form QV understands. QVX is a semi-binary format: an XML header describing the fields, followed by the data itself in binary form. In January 2012 Ralf Becher of TIQ Solutions wrote a Java-based library to convert CSV files to QVX. Alongside the open-source version, TIQ also sells a multi-threaded version for very large datasets. Remember, the growing volume of data behind dashboards is what motivated the QVX format in the first place! Now this has been taken a step further.
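To make the "XML header followed by binary data" layout concrete, here is a minimal Python sketch of a QVX-style file. It is a simplified illustration, not a conforming QVX writer: the element names are modeled loosely on QlikTech's published header, while the single text type, the 4-byte little-endian length prefixes and the zero-byte separator are assumptions chosen for the example. The helpers `csv_to_qvx_like` and `read_qvx_like` are hypothetical.

```python
import csv
import struct
import xml.etree.ElementTree as ET


def build_header(table_name, field_names):
    """Simplified, QVX-inspired XML header; a real header carries more
    elements (e.g. byte order and null handling)."""
    root = ET.Element("QvxTableHeader")
    ET.SubElement(root, "TableName").text = table_name
    fields = ET.SubElement(root, "Fields")
    for name in field_names:
        fh = ET.SubElement(fields, "QvxFieldHeader")
        ET.SubElement(fh, "FieldName").text = name
        ET.SubElement(fh, "Type").text = "QVX_TEXT"        # every field stored as text here
        ET.SubElement(fh, "Extent").text = "QVX_COUNTED"   # values carry a length prefix
    return ET.tostring(root, encoding="utf-8")


def csv_to_qvx_like(lines, table_name="table"):
    """Hypothetical converter: CSV lines -> XML header + zero byte + binary records."""
    rows = [r for r in csv.reader(lines) if r]
    field_names, records = rows[0], rows[1:]
    out = bytearray(build_header(table_name, field_names))
    out += b"\x00"  # assumed separator between the XML header and the data
    for record in records:
        for value in record:
            data = value.encode("utf-8")
            out += struct.pack("<I", len(data)) + data  # 4-byte length, then bytes
    return bytes(out)


def read_qvx_like(blob):
    """Read the sketch format back: parse the header, then walk the records."""
    end = blob.index(b"\x00")  # the XML header itself contains no zero bytes
    root = ET.fromstring(blob[:end])
    names = [fh.findtext("FieldName") for fh in root.iter("QvxFieldHeader")]
    pos, rows = end + 1, []
    while pos < len(blob):
        row = []
        for _ in names:
            (length,) = struct.unpack_from("<I", blob, pos)
            pos += 4
            row.append(blob[pos:pos + length].decode("utf-8"))
            pos += length
        rows.append(row)
    return names, rows


blob = csv_to_qvx_like(["id,name\n", "1,Alice\n", "2,Bob\n"])
names, rows = read_qvx_like(blob)
# names -> ['id', 'name'], rows -> [['1', 'Alice'], ['2', 'Bob']]
```

The reader never scans for delimiters or guesses types: the header says what each field is, and every value carries its own length. Skipping that parsing work is the kind of preprocessing that lets QVX load faster than CSV; a real QVX file can also store numbers in typed binary form rather than as text.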

Jasper Knulst, a Hadoop specialist working for Incentro, has collaborated with Ralf to refactor his QVX converter so that it runs on Hadoop as a MapReduce job. This means QVX files can be generated directly as Hadoop output, leveraging Hadoop's processing power, which scales horizontally by design.
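Why a MapReduce job helps can be sketched locally. The Python below is a hypothetical, map-only model: the CSV rows are cut into splits, each split is encoded independently into a self-contained QVX-style file, and the outputs are named after Hadoop's `part-m-NNNNN` convention for map-task output. Threads only stand in for map tasks that Hadoop would schedule across nodes. The function names, the minimal header, the length-prefixed record layout and the one-file-per-task design are all assumptions for illustration, not how HQC is actually implemented.

```python
import csv
import struct
from concurrent.futures import ThreadPoolExecutor
from xml.sax.saxutils import escape


def make_header(field_names):
    """Minimal, QVX-inspired header listing the field names (assumed layout)."""
    fields = "".join(
        f"<QvxFieldHeader><FieldName>{escape(n)}</FieldName></QvxFieldHeader>"
        for n in field_names
    )
    return f"<QvxTableHeader><Fields>{fields}</Fields></QvxTableHeader>".encode("utf-8")


def encode_split(header, rows):
    """Hypothetical map task: one input split -> one self-contained output file."""
    out = bytearray(header)
    out += b"\x00"  # assumed header/data separator
    for row in rows:
        for value in row:
            data = value.encode("utf-8")
            out += struct.pack("<I", len(data)) + data
    return bytes(out)


def split_rows(rows, n_splits):
    size = max(1, -(-len(rows) // n_splits))  # ceiling division
    return [rows[i:i + size] for i in range(0, len(rows), size)]


def convert_parallel(lines, n_splits=4):
    rows = [r for r in csv.reader(lines) if r]
    header = make_header(rows[0])
    splits = split_rows(rows[1:], n_splits)
    # Each split is converted independently, so the tasks could run anywhere.
    with ThreadPoolExecutor(max_workers=n_splits) as pool:
        parts = list(pool.map(lambda s: encode_split(header, s), splits))
    return {f"part-m-{i:05d}": blob for i, blob in enumerate(parts)}


lines = ["id,name"] + [f"{i},user{i}" for i in range(10)]
parts = convert_parallel(lines, n_splits=3)
# Three output files: part-m-00000 (4 rows), part-m-00001 (4 rows), part-m-00002 (2 rows)
```

Because no split depends on another, adding machines adds conversion capacity, which is what makes Hadoop's horizontal scaling applicable to QVX generation.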

The joint Hadoop QVX converter (HQC) will be announced this week to coincide with Qonnections 2012. HQC will be especially useful for organisations that use both QV and Hadoop. On the one hand, large datasets can be passed through a Hadoop MapReduce (MR) job, using Hadoop as a QVX converter on steroids. On the other hand, analytic or ETL jobs on Hadoop can write their output directly in QVX format. Incentro and TIQ Solutions both provide professional services for HQC implementations.